What Is the Best Activation Function in Neural Networks

ReLU(x) = max(0, x). So if the input is negative, the output of ReLU is 0, and for positive values it is x. ReLU is the most used activation function in the world right now.
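The definition above can be sketched in a few lines of plain Python. This is only an illustrative sketch; the function name `relu` and the sample inputs are assumptions chosen for the example, not something the article prescribes.

```python
def relu(x: float) -> float:
    """Return max(0, x): 0 for negative inputs, x itself otherwise."""
    return max(0.0, x)


# Hypothetical sample inputs, chosen only to show both branches.
for value in (-2.0, -0.5, 0.0, 1.5, 3.0):
    print(value, "->", relu(value))
```

In a real network the same operation would typically be applied element-wise to a whole tensor by the framework in use.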


But in my experience, ReLU works better with more complicated models.

The sigmoid function curve looks like an S-shape. But being perfect does not mean it is the best activation function. The biggest advantage of the tanh function is that it produces a zero-centered output, thereby supporting the backpropagation process.

The Rectified Linear Unit, commonly known as ReLU, with ReLU(z) = max(0, z), is perhaps one of the best-known practical activation functions. For other layers, it is hard to tell whether sigmoid or ReLU is better. This may seem surprising at first, as so far the very nonlinear functions seem to work better.

Sigmoid or Logistic Activation Function. ReLU is the most commonly used activation function in neural networks, especially in CNNs. The benefit of the rectifier actually comes later, in backpropagation.

Non-Linear Neural Network. Tanh Function (Hyperbolic Tangent). The input is a real value and the output resides between 0.

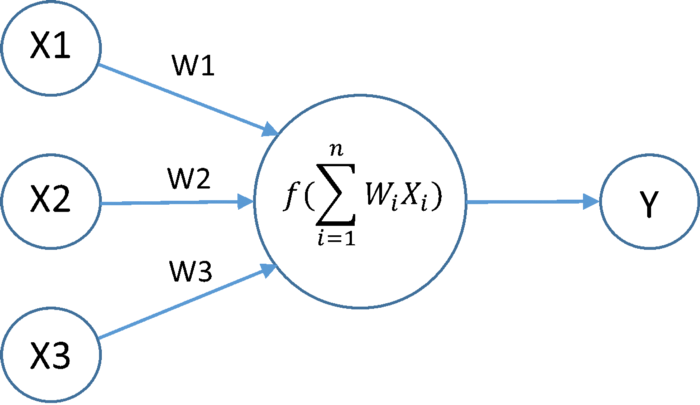

This activation function is very basic, and it comes to mind every time. 10 Non-Linear Neural Network Activation Functions: Sigmoid (Logistic) Activation Function. The function is attached to each neuron in the network and determines whether it should be activated (fired) or not, based on whether each neuron's input is.

If you are unsure what activation function to use in your network, ReLU is usually a good first choice. When training, use ReLU in the hidden layers: you need the x > 0 values, so ReLU keeps them. So what's the problem with the sigmoid function?

ReLU is the most commonly used activation function in neural networks, and its mathematical equation is y = max(0, x). ReLU vs. Sigmoid: As you can see the ReLU is. One of the main reasons for putting so much effort into Artificial Neural Networks (ANNs) is to replicate the functionality of the human brain (the real neural networks).

The rectified linear activation function, or ReLU activation function, is perhaps the most common function used for hidden layers. It is a simple straight line activation function where our function is. In neural networks, ReLU is a widely used activation function.

It helps reduce the likelihood of vanishing gradients. It is common because it is both simple to implement and effective at overcoming the limitations of previously popular activation functions such as sigmoid and tanh. The reasons are as follows.
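One way to see the vanishing-gradient point is to compare the local derivatives of sigmoid and ReLU. The sketch below is a toy illustration, not a real backpropagation pass: it assumes every layer sees the same input and simply raises the local derivative to the power of a hypothetical `depth`. The choice of derivative 0 for ReLU at exactly x = 0 is also an assumption (a common convention).

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_grad(x: float) -> float:
    """Derivative of sigmoid: s * (1 - s). It never exceeds 0.25."""
    s = sigmoid(x)
    return s * (1.0 - s)


def relu_grad(x: float) -> float:
    """Derivative of ReLU: 1 for positive inputs, 0 otherwise (convention at 0)."""
    return 1.0 if x > 0 else 0.0


def chained_gradient(grad_fn, x: float, depth: int) -> float:
    """Toy stand-in for the chain rule: the same local derivative multiplied depth times."""
    return grad_fn(x) ** depth


depth = 10  # hypothetical number of stacked layers
x = 2.0     # hypothetical pre-activation value

print("sigmoid:", chained_gradient(sigmoid_grad, x, depth))
print("relu:   ", chained_gradient(relu_grad, x, depth))
```

Because the sigmoid derivative is at most 0.25, the product shrinks quickly with depth, whereas the ReLU derivative stays at 1 for positive inputs.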

The Rectified Linear Unit (ReLU) activation function is the most popular and successful function used in deep learning. The binary step function was one of the first activation functions to be used in a neural network. It is the perfect activation function we are looking for, one that is both nonlinear and has a continuous derivative.
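For comparison, the binary step function mentioned above can be sketched as follows. The threshold of 0 and the choice to fire at exactly the threshold are assumptions made for this example; other conventions exist.

```python
def binary_step(x: float, threshold: float = 0.0) -> int:
    """Return 1 (fire) when x reaches the threshold, otherwise 0."""
    return 1 if x >= threshold else 0


# Hypothetical inputs on both sides of the threshold.
for value in (-1.0, -0.1, 0.0, 0.1, 2.5):
    print(value, "->", binary_step(value))
```

The output jumps from 0 to 1 at the threshold and is flat everywhere else, so its derivative is zero wherever it is defined. That is the contrast with a function that has a continuous, non-zero derivative.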

ReLU, max(0, x), is used to extract feature maps from data. I'll answer the first two questions to the best of my ability. Since it is used in almost all convolutional neural networks or deep learning.

Linear: The linear function is the most basic activation function, in which the output is proportional to the input. There is a massive problem, though. This function takes any real value as input and outputs values in the range of 0.

It's actually a mathematically shifted version of the sigmoid function. If we look carefully at the graph towards the ends of the function y. Neural networks classify data that is not linearly separable by transforming the data with some nonlinear function (our activation function), so that the resulting transformed points become linearly separable.
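Assuming the "shifted version of the sigmoid" above refers to tanh, the relationship is the identity tanh(x) = 2 * sigmoid(2x) - 1, that is, a rescaled and shifted sigmoid. The sketch below checks it numerically; the helper name `tanh_from_sigmoid` and the sample points are hypothetical.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def tanh_from_sigmoid(x: float) -> float:
    """Build tanh from sigmoid: stretch the output to (-1, 1) and shift it down by 1."""
    return 2.0 * sigmoid(2.0 * x) - 1.0


# Hypothetical sample points; the identity holds for every real x.
for x in (-3.0, -1.0, 0.0, 0.5, 2.0):
    print(x, tanh_from_sigmoid(x), math.tanh(x))
```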

Activation functions are mathematical equations that determine the output of a neural network. ReLU is the most commonly used activation function in neural networks especially in CNNs. Tanh or hyperbolic tangent Activation Function.

ReLU stands for rectified linear unit and is a type of activation function. The sigmoid function is the most frequently used activation function, but there are many other, more efficient alternatives.

Tanh Function - The activation that almost always works better than the sigmoid function is the tanh function, also known as the hyperbolic tangent function. In this article I'll discuss the various types of activation functions present in a neural network. Sigmoid, tanh, Softmax, ReLU, Leaky ReLU EXPLAINED.

Mathematically, it is defined as y = max(0, x). This is why it is used in the hidden layers, where we're learning what important characteristics or features the data holds that could help the model learn how to classify, for example. It is also faster to compute than sigmoid because the function is so simple.

Firstly, for the last layer of binary classification, the activation function is normally softmax if you define the last layer with 2 nodes, or sigmoid if the last layer has 1 node. That's all I have right now. I hope you've learned the basics of activation functions and why they are so important in neural networks. Tanh function is very similar to the.
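The two output-layer options for binary classification are closely related: a 2-node softmax gives the same probability for the first class as a 1-node sigmoid applied to the difference of the two logits. The sketch below illustrates this in plain Python; the logit values are hypothetical, and subtracting the maximum inside `softmax` is a common numerical-stability trick rather than something the article describes.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def softmax(logits):
    """Softmax over a list of logits, shifted by the max for numerical stability."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits produced by a 2-node output layer.
z_pos, z_neg = 1.2, -0.3

p_softmax = softmax([z_pos, z_neg])[0]   # probability of the first class, 2 nodes
p_sigmoid = sigmoid(z_pos - z_neg)       # same probability from a single logit

print(p_softmax, p_sigmoid)
```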

I suggest looking at ReLU; softmax is used as well, but you get better results in practice with ReLU. By using ReLU, all the negative values of. Binary Step Neural Network Activation Function.

Types of Activation Functions A. If possible, the hyperbolic tangent function is selected as the activation function. When working with neural networks, you want this function because it keeps the nonlinearity; of course, this applies in the output layer.

Equation: Y = az, which is similar to the equation of a straight line. Including its differential are smooth nonlinearities. It makes signal processing better.

Linear Neural Network Activation Function. The main reason why we use. Gives a range of activations from -inf to inf.

The tanh function is much more extensively used than the sigmoid function since it delivers better training performance for multilayer neural networks. The range of the tanh function is from -1 to 1.
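The range claim, and the zero-centered property mentioned earlier in the article, can be checked numerically. This sketch evaluates both functions on a hypothetical symmetric grid of inputs from -5 to 5; the grid itself is an assumption chosen for illustration.

```python
import math

# Hypothetical symmetric input grid: -5.0, -4.9, ..., 4.9, 5.0
inputs = [i / 10.0 for i in range(-50, 51)]

tanh_out = [math.tanh(x) for x in inputs]
sigmoid_out = [1.0 / (1.0 + math.exp(-x)) for x in inputs]

mean_tanh = sum(tanh_out) / len(tanh_out)
mean_sigmoid = sum(sigmoid_out) / len(sigmoid_out)

print("tanh range:   ", min(tanh_out), max(tanh_out), "mean:", mean_tanh)
print("sigmoid range:", min(sigmoid_out), max(sigmoid_out), "mean:", mean_sigmoid)
```

On symmetric inputs the tanh outputs average out to about 0, while the sigmoid outputs average out to about 0.5, which is what "zero-centered" refers to.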

